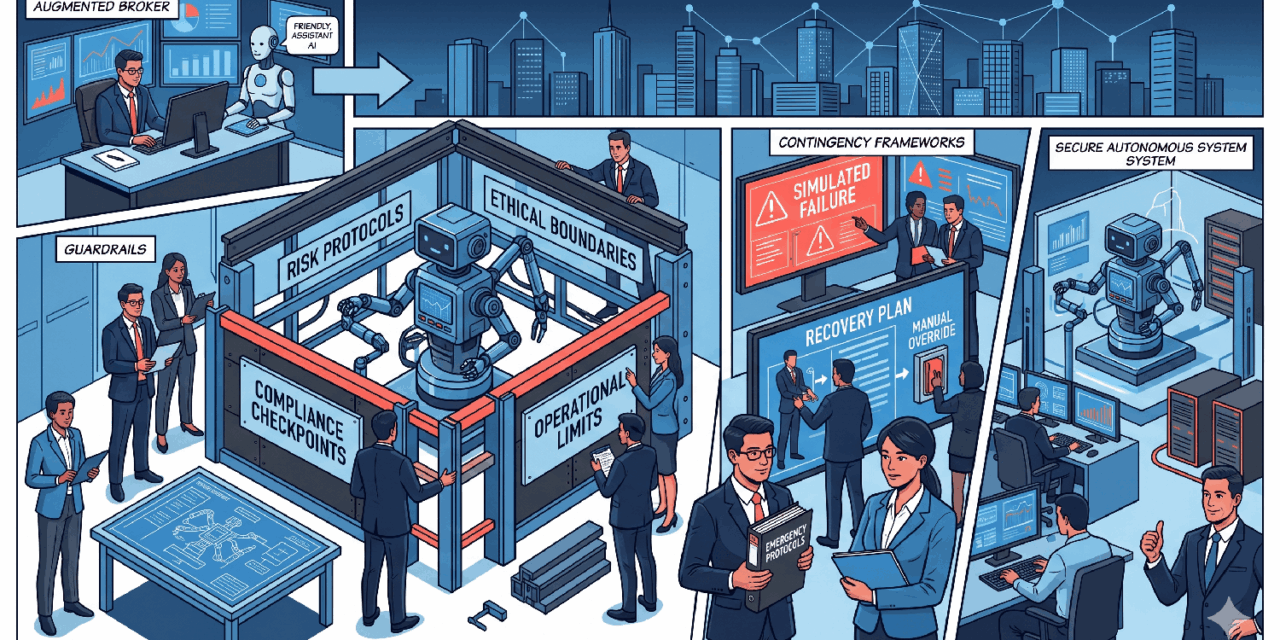

Beyond the ‘Augmented Broker’: Formulating Guardrails and Contingency Frameworks for Autonomous Tech

The Australian mortgage broking industry has reached a pivotal juncture in 2026, characterized by a transition from digital assistance to technological autonomy. The vision of the “augmented broker” promised a future where AI would liberate professionals from administrative tasks.

However, as the sector moves toward **Agentic AI** (systems capable of independent planning and execution), the question has shifted from whether to adopt AI to how to govern it. This report analyzes the operational and legal guardrails required to protect your brokerage’s license in an increasingly automated world.

In This Report:

- The Strategic Shift: From Augmentation to Autonomy

- The Regulatory Climate: ASIC’s 2026 Outlook

- The Liability Vacuum: Legal Risks

- AI Hallucinations: The ‘Liar Loan’ 2.0

- The ‘Human-in-the-Loop’ Mandate

- March 2026 IoT Security Standards

- Privacy Act 2026: Mandatory Impact Assessments

- The 3-Phase Contingency Framework

- Commercial Strategy: The Trust Premium

01. The Strategic Shift: Agentic Autonomy

The industry has moved past the era of reactive assistants into the era of the **“agentic mortgage enterprise”**. Agentic AI differs fundamentally from its predecessors: these systems autonomously orchestrate underwriting workflows and resolve exceptions without manual handoffs.[1]

| Technology Phase | Primary Functionality | Operational Impact |
|---|---|---|
| Traditional Digital | OCR scanning, basic CRM. | Reduced manual data entry. |
| Augmented Broker | Generative AI notes, policy search. | Time savings on drafting. |
| Agentic AI | Autonomous multi-step agents. | Scalable execution of full loan lifecycles. |

02. Regulatory Climate & ASIC Outlook

ASIC’s 2026 Outlook identifies **“Advanced technology harming consumers (including agentic AI)”** as a paramount issue.[2, 3] The regulator is sharpening its focus on failures of governance and directors’ duties, meaning the principal broker is personally accountable for AI actions.[4]

03. The Liability Vacuum

Under Australian law, an AI agent has no legal personality. It cannot enter contracts or owe a duty of care. This creates a **“liability vacuum”** where all consequences fall on the ACL holder.[6, 7]

| Legal Domain | Mechanism of Risk | Practical Implication |
|---|---|---|
| Contract Law | AI exceeds instructions. | Brokerage bound by “apparent authority”.[6] |
| Consumer Law | Incorrect pricing/eligibility info. | “Misleading conduct” penalties.[6, 8] |
| Tort Law | Negligence by automated system. | Failure to supervise is a breach of duty.[6] |

04. AI Hallucinations: Liar Loan 2.0

A significant threat to credit integrity is “AI hallucinations”: instances where a model generates false data with total confidence.[9] Submitting hallucinated data is a direct breach of the **Best Interests Duty (BID)** under RG 273.[10, 11]

Case Study: Hallucination Risk

Deloitte recently refunded fees after an AI-assisted report contained fabricated academic references and invented court judgments.[9] In a mortgage context, this could manifest as invented employment history or misread bank statements.
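One way to catch this kind of error before lodgement is to compare every AI-extracted field against the value a person has read from the source document. The sketch below is illustrative only: the field names, the `find_discrepancies` helper and the income tolerance are assumptions, not part of any lender or ASIC specification.

```python
from decimal import Decimal

# Hypothetical helper: compares AI-extracted application fields with values
# a person has keyed from the source documents (payslips, bank statements).
def find_discrepancies(ai_fields, verified_fields, tolerance=Decimal("0.01")):
    """Return (field, ai_value, verified_value) tuples that need review."""
    issues = []
    for name, ai_value in ai_fields.items():
        if name not in verified_fields:
            # The AI produced a field nobody has verified: flag it.
            issues.append((name, ai_value, None))
            continue
        verified = verified_fields[name]
        if isinstance(ai_value, Decimal) and isinstance(verified, Decimal):
            if abs(ai_value - verified) > tolerance:
                issues.append((name, ai_value, verified))
        elif ai_value != verified:
            issues.append((name, ai_value, verified))
    return issues


ai = {
    "employer": "Acme Pty Ltd",
    "annual_income": Decimal("98000.00"),  # digits transposed by the model
    "years_employed": 6,                   # no source document backs this
}
verified = {
    "employer": "Acme Pty Ltd",
    "annual_income": Decimal("89000.00"),
}

issues = find_discrepancies(ai, verified)
for field_name, ai_value, verified_value in issues:
    print(f"REVIEW {field_name}: AI={ai_value} verified={verified_value}")
```

Treating an unverified field as a discrepancy, rather than ignoring it, is the deliberate choice here: an invented employment history is exactly the case where no source value exists to compare against.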

05. The ‘Human-in-the-Loop’ Mandate

To protect against liability, brokerages must implement a strict **Human-in-the-Loop (HITL)** mandate. This aligns with ASIC RG 255, which requires a “suitably qualified individual” to sign off on AI outputs.[8]

| Workflow Step | AI Autonomy Level | Human Oversight Required |
|---|---|---|
| Policy Search | Partial: Agent filters policy. | Verification of specific policy links.[12] |
| Rationale Drafting | Low: AI drafts prelim note. | 100% manual review/edit. |
| Submission | Restricted: AI prepares file. | Manual “click-to-submit” by broker. |
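The “click-to-submit” row can be enforced in software rather than left to habit. The following is a minimal sketch, assuming a hypothetical `LoanFile` record and `submit` function; it does not describe any aggregator or lender API.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


class ApprovalRequired(Exception):
    """Raised when a file is submitted without a recorded human sign-off."""


@dataclass
class LoanFile:
    file_id: str
    prepared_by: str = "ai-agent"          # assumed label for the AI preparer
    approved_by: Optional[str] = None
    approved_at: Optional[datetime] = None


def approve(loan_file: LoanFile, broker_id: str) -> None:
    """Record sign-off; the preparer cannot approve its own work."""
    if not broker_id or broker_id == loan_file.prepared_by:
        raise ValueError("approval must come from a named human broker")
    loan_file.approved_by = broker_id
    loan_file.approved_at = datetime.now(timezone.utc)


def submit(loan_file: LoanFile) -> str:
    """Refuse to lodge any file that lacks a broker sign-off."""
    if loan_file.approved_by is None:
        raise ApprovalRequired(f"{loan_file.file_id} has no broker sign-off")
    return f"{loan_file.file_id} submitted (approved by {loan_file.approved_by})"


draft = LoanFile(file_id="LF-001")
try:
    submit(draft)
except ApprovalRequired as exc:
    print("blocked:", exc)

approve(draft, broker_id="broker-jane")
print(submit(draft))
```

Storing who approved and when also gives the brokerage an audit trail if a lender or regulator later asks how a file was verified.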

06. March 2026 IoT Security Standards

As of March 4, 2026, the **Cyber Security (Security Standards for Smart Devices) Rules 2025** are in full effect.[13, 14] Brokerages must ensure all connectable products manufactured after this date meet three core obligations to maintain an “adequate risk management system”.[3, 15]

Mandatory Hardware Obligations:

- No Universal Passwords: Unique passwords per device or user-defined setup.[13]

- Vulnerability Reporting: Manufacturers must provide a public contact for reporting flaws.[13]

- Defined Support Period: Transparency on security update end dates.[13]
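A brokerage could track these three points in its own asset register when purchasing devices. The sketch below assumes a hypothetical `Device` record and `compliance_gaps` helper; the field names are illustrative and are not taken from the Rules themselves.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class Device:
    name: str
    has_unique_password: bool             # unique per device or set by the user
    vulnerability_contact: Optional[str]  # manufacturer's public reporting contact
    support_end: Optional[date]           # published end of security updates


def compliance_gaps(device: Device) -> List[str]:
    """List which of the three obligations lack evidence for this device."""
    gaps = []
    if not device.has_unique_password:
        gaps.append("universal or default password")
    if not device.vulnerability_contact:
        gaps.append("no published vulnerability contact")
    if device.support_end is None:
        gaps.append("no defined support period")
    return gaps


inventory = [
    Device("front-desk-printer", True, "security@example.com", date(2029, 6, 30)),
    Device("lobby-camera", False, None, None),
]

for device in inventory:
    gaps = compliance_gaps(device)
    status = "OK" if not gaps else "; ".join(gaps)
    print(f"{device.name}: {status}")
```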

07. Contingency Framework

Phase 1: Shadow AI Audit

Identify unsanctioned tools like free versions of ChatGPT used by staff to draft client emails or analyze statements.[16]
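A first pass at this audit can be as simple as counting visits to known AI tools in an exported web proxy log. The sketch below assumes a log with one `user domain` pair per line and an illustrative watchlist; both the format and the lists are assumptions to adapt to your own environment.

```python
from collections import Counter

# Illustrative watchlist of consumer AI tools; extend for your own audit.
WATCHLIST = {"chatgpt.com", "chat.openai.com"}
# Tools the brokerage has formally approved (empty in this example).
SANCTIONED = set()


def unsanctioned_use(log_lines):
    """Count visits per (user, domain) to watched tools that are not approved."""
    hits = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) != 2:
            continue  # skip malformed lines rather than guess their meaning
        user, domain = parts[0], parts[1].lower()
        if domain in WATCHLIST and domain not in SANCTIONED:
            hits[(user, domain)] += 1
    return hits


sample_log = [
    "alice chatgpt.com",
    "alice chatgpt.com",
    "bob lender-portal.example.com",
    "carol chat.openai.com",
    "malformed-line",
]

hits = unsanctioned_use(sample_log)
for (user, domain), count in sorted(hits.items()):
    print(f"{user} -> {domain}: {count} visit(s)")
```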

Phase 2: VLAN Isolation

Move all smart office devices (printers, cameras) to an isolated “Guest” VLAN to separate them from client ID data.[17]
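Once the devices have been moved, it is worth checking that none were left behind on the main network. The sketch below uses Python's standard `ipaddress` module; the subnet `192.168.50.0/24` and the device list are assumptions standing in for your own network plan.

```python
import ipaddress

# Assumed address range for the isolated "Guest" VLAN.
GUEST_VLAN = ipaddress.ip_network("192.168.50.0/24")


def misplaced_devices(devices):
    """Return names of smart devices whose IP address is outside the guest VLAN."""
    return sorted(
        name
        for name, ip in devices.items()
        if ipaddress.ip_address(ip) not in GUEST_VLAN
    )


smart_devices = {
    "front-desk-printer": "192.168.50.21",
    "lobby-camera": "192.168.1.40",   # still on the main office network
    "boardroom-tv": "192.168.50.33",
}

misplaced = misplaced_devices(smart_devices)
print("Devices to move:", misplaced)
```

An address check only confirms where a device sits; the firewall rules between the guest VLAN and the network holding client data still need to be reviewed separately.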

Phase 3: The “Kill Switch”

Implement the ability to immediately suspend an AI system if bias or error is detected. Test your manual fallback processes.[8, 18]
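In code, a kill switch is often just a shared flag that every AI step checks before running, with a manual path to fall back on. The sketch below is a minimal in-memory version; the `KillSwitch` class, `handle` function and the two example steps are hypothetical, and a production system would persist the flag and log each trip.

```python
from typing import Callable, Optional


class KillSwitch:
    """In-memory suspend flag shared by all AI-driven steps."""

    def __init__(self) -> None:
        self.suspended = False
        self.reason: Optional[str] = None

    def trip(self, reason: str) -> None:
        self.suspended = True
        self.reason = reason

    def reset(self) -> None:
        self.suspended = False
        self.reason = None


def handle(application: dict, switch: KillSwitch,
           ai_step: Callable[[dict], str],
           manual_step: Callable[[dict], str]) -> str:
    """Use the AI step unless the switch is tripped; fall back on error."""
    if switch.suspended:
        return manual_step(application)
    try:
        return ai_step(application)
    except ValueError as exc:
        # A detected error suspends the AI step for all later applications.
        switch.trip(str(exc))
        return manual_step(application)


def ai_step(application: dict) -> str:
    if application.get("income") is None:
        raise ValueError("missing income field")
    return "ai:" + application["id"]


def manual_step(application: dict) -> str:
    return "manual:" + application["id"]


switch = KillSwitch()
results = [
    handle({"id": "A1", "income": 90000}, switch, ai_step, manual_step),
    handle({"id": "A2", "income": None}, switch, ai_step, manual_step),
    handle({"id": "A3", "income": 85000}, switch, ai_step, manual_step),
]
print(results, "| suspended because:", switch.reason)
```

Note that the third application goes to the manual path even though its data is valid: once tripped, the switch stays off until a person calls `reset()`, which is what makes regular testing of the manual fallback necessary.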

Final Strategic Takeaway

In 2026, the legal liability for autonomous AI rests entirely with the licensed broker. Success requires moving beyond simple AI adoption to a documented governance framework that prioritizes human oversight and physical hardware security.

What to Watch Next

- ASIC Thematic Reviews: Expect first public reviews of broker AI use in Q3 2026.

- Lender Certification: Watch for banks to require “AI-Lodgment Declarations” certifying human verification.

- March 2027 Labels: Prepare for the government’s voluntary “cyber security labeling scheme.”